Corruption-Tolerant Gaussian Process Bandit Optimization

Abstract

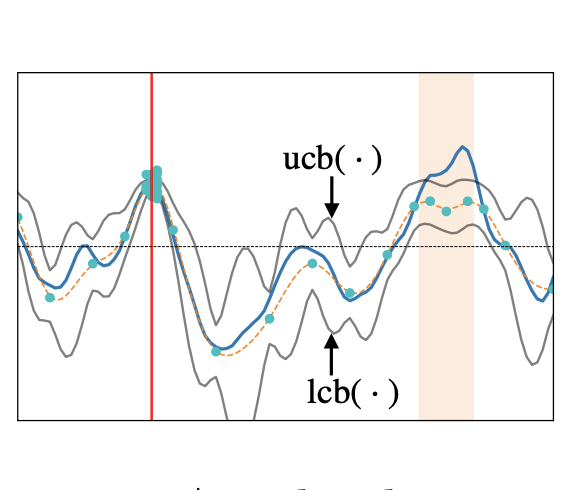

We consider the problem of optimizing an unknown (typically non-convex) function with a bounded norm in some Reproducing Kernel Hilbert Space (RKHS), based on noisy bandit feedback. We consider a novel variant of this problem in which the point evaluations are not only corrupted by random noise, but also adversarial corruptions. We introduce an algorithm Fast-Slow GP-UCB based on Gaussian process methods, randomized selection between two instances labeled fast (but non-robust) and slow (but robust), enlarged confidence bounds, and the principle of optimism under uncertainty. We present a novel theoretical analysis upper bounding the cumulative regret in terms of the corruption level, the time horizon, and the underlying kernel, and we argue that certain dependencies cannot be improved. We observe that distinct algorithmic ideas are required depending on whether one is required to perform well in both the corrupted and non-corrupted settings, and whether the corruption level is known or not.